
Enhancing Data Processing and Data Analyst Flexibility

The banking and financial services sectors are known to be data-heavy – market data messages observed at a tier-one bank by CJC almost doubled in less than 3 years. The sheer volume of market data means systematic processing and ongoing data management are compulsory for provisioning, entitlement usage and compliance reasons. This article provides insight into:

- Frequently used databases.

- The optimal method for complex data transformations.

- Supporting market data sources, databases and technologies.

Co-Written By: Antony Fung, Marketing Manager at CJC Ltd.

Frequently Used Databases

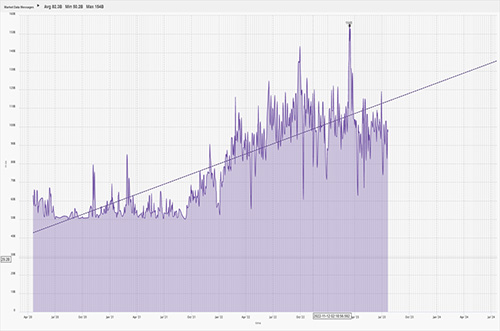

The concept of Big Data has indisputably been a game-changer in most (if not all) modern industries, and the rate at which data is generated continues to grow. The financial and capital markets are no exception, as CJC’s observability and IT monitoring solution, mosaicOA, witnessed between April 2020 and July 2023 (below right). The observation is supported by multiple individuals, including Christopher Tozzi, adjunct research advisor for IDC, and Shruti Mohan, Senior Research Analyst at Simplilearn Solutions, who named the banking sector the most data-reliant industry.

Source: CJC - Market data messages observed via mosaicOA

As we can see from mosaicOA’s trend line (right), the volume of market data is steadily increasing, rising from a peak of 80 billion market data messages in October 2020 to 154 billion by March 2023, almost double. Once processed for consumption, all this data is invaluable to a financial firm’s business operations. The challenge is sourcing the necessary expertise across the various types of database, let alone supporting the underlying systems on a 24/7 basis.

SQL and NoSQL databases, including MySQL, PostgreSQL, Sybase, and Oracle, are just a few of the database technologies currently under CJC’s management. They often store the vital provisioning or entitlement usage data that market data administration teams draw from systems such as LSEG Refinitiv DACS and EMRS. For market data storage, KX KDB+ and Google Bigtable are popular choices of time-series database, and skills in migrating to them are in demand among CJC clients.

Optimal Method for Complex Data Transformations

Historically, CJC leveraged an ETL (Extract-Transform-Load) process, an older method ideal for complex transformations of smaller data sets. As seen above, market data is no longer the small data set it once was, so CJC has since adopted ELT (Extract-Load-Transform) processes. Whereas the older ETL approach transforms (or ‘cooks’) the data before it appears in a rigid database, ELT loads the unstructured data into a flexible database, where the parameters can be customised to suit how the data scientist wants to examine the granular data for ‘outside the box’ analysis.

ELT has further advantages. Because ETL transforms data before loading it, ETL adds computational overhead that delays delivery to the end user. Its transformation methods are also more rigid and can be harder to adjust during production hours, and there is a risk of corrupting or losing data altogether. ELT, by contrast, lets the user process and analyse only the parts of a dataset they are interested in, for example the trade data in a specific timeframe, which again reduces the time required to deliver the data.

ELT provides greater flexibility to analysts and is better suited to processing structured and unstructured data.
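To make the ELT idea concrete, here is a minimal sketch in Python using the standard-library `sqlite3` module as a stand-in for a production database. The table name `raw_ticks`, the symbols `AAA` and `BBB`, the sample prices, and the `load_raw` and `vwap` helpers are all hypothetical and chosen only for illustration; this is not CJC’s pipeline. The raw ticks are loaded untouched, and the transformation (a volume-weighted average price) is computed at query time over only the timeframe the analyst asks for.

```python
import sqlite3

# Hypothetical raw ticks: (symbol, ISO-8601 timestamp, price, size).
RAW_TICKS = [
    ("AAA", "2023-03-01T09:00:00", 101.5, 200),
    ("AAA", "2023-03-01T09:00:01", 101.6, 150),
    ("BBB", "2023-03-01T09:00:01", 155.2, 300),
    ("AAA", "2023-03-01T10:30:00", 102.1, 100),
]


def load_raw(conn, ticks):
    """Extract + Load: store the ticks exactly as received, with no transformation."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS raw_ticks "
        "(symbol TEXT, ts TEXT, price REAL, size INTEGER)"
    )
    conn.executemany("INSERT INTO raw_ticks VALUES (?, ?, ?, ?)", ticks)
    conn.commit()


def vwap(conn, symbol, start, end):
    """Transform at query time, over only the requested symbol and time window.

    Returns None when no ticks fall inside the half-open window [start, end).
    """
    row = conn.execute(
        "SELECT SUM(price * size) / SUM(size) FROM raw_ticks "
        "WHERE symbol = ? AND ts >= ? AND ts < ?",
        (symbol, start, end),
    ).fetchone()
    return row[0]


conn = sqlite3.connect(":memory:")
load_raw(conn, RAW_TICKS)

# Only the 09:00-10:00 slice of AAA is transformed; the rest stays raw.
result = vwap(conn, "AAA", "2023-03-01T09:00:00", "2023-03-01T10:00:00")
print(result)
```

Because the raw rows are never overwritten, a different transformation (another window, another symbol, another aggregate) can be run later without reloading anything, which is the flexibility the ELT approach is aiming for.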

CJC has a ‘cloud-first’ policy with Google Cloud (GCP) as our partner of choice. Our global teams are experts in advanced cloud tooling like Kubernetes and Google Bigtable which, as noted earlier, serves as a time-series database and is one that Google itself recommends for financial data. CJC enables and accelerates the cloud adoption of complex workloads, including database-driven systems. In short, CJC’s technical team can reduce the timeframe of ETL from weeks to days or even hours.

Supporting Market Data Sources, Databases and Technologies

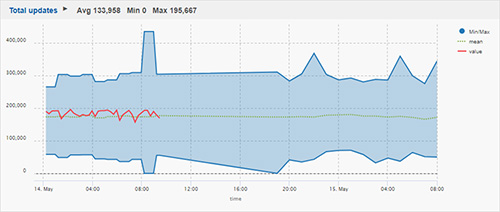

From an operational standpoint, a market data-fed time-series database must handle vast amounts of unconflated data from exchange/vendor sources. Every tick sent must be captured. On paper, databases like InfluxDB/KDB can experience hundreds of thousands of updates per second or billions of updates per day. To achieve this, CJC’s SRE engineers architect a resilient database schema from both a software and hardware perspective, whether on physical server technology or in a private/public cloud. The market data sources, databases and underlying technologies must all be resilient and robust to avoid data gaps.
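The step from “hundreds of thousands of updates per second” to “billions of updates per day” is simple arithmetic, sketched below. The two rates are illustrative assumptions rather than measured figures, and the calculation assumes the rate is sustained for a full 24 hours, which real markets with opening hours will not do.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def updates_per_day(updates_per_second: int) -> int:
    """Daily update count, assuming the per-second rate is sustained all day."""
    return updates_per_second * SECONDS_PER_DAY


# Illustrative rates only (assumptions, not observed values).
for rate in (100_000, 500_000):
    print(f"{rate:,} updates/s -> {updates_per_day(rate):,} updates/day")
```

At 100,000 updates per second the sustained daily total is 8.64 billion, so even the low end of “hundreds of thousands per second” lands in the billions per day.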

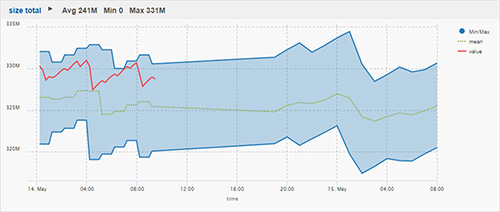

Once the system is set up, CJC has the expertise to support ongoing operations. This can be best demonstrated with visualisations from our time-series database-powered product, mosaicOA. Proactive monitoring is required to ensure the system is operating within tolerance limits. CJC can monitor exchange and market data feeds 24x7x365 to ensure seamless operations and avoid gaps.

The relationship between a market data source (publisher) and the database (subscriber) requires a messaging layer (pub/sub). For market data, this could be RTDS, Solace, Kafka, or Google Pub/Sub.
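The publisher/subscriber relationship can be sketched with a toy in-process broker. This is only an illustration of the pattern: it does not use the API of RTDS, Solace, Kafka, or Google Pub/Sub, and the `Broker` and `TickStore` classes, the topic name `ticks.AAA`, and the message shape with a `seq` field are all hypothetical. The subscriber records every tick and uses the sequence number to notice when an update never arrived, which is the kind of data gap the text warns about.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical message shape: {"seq": int, "symbol": str, "price": float}
Tick = dict


class Broker:
    """Toy in-process messaging layer standing in for a real pub/sub product."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[Tick], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[Tick], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, tick: Tick) -> None:
        # Deliver the tick to every handler registered for this topic.
        for handler in self._subscribers[topic]:
            handler(tick)


class TickStore:
    """Subscriber that keeps every tick and records gaps in the sequence numbers."""

    def __init__(self) -> None:
        self.rows: List[Tick] = []
        self.gaps: List[Tuple[int, int]] = []  # inclusive (first_missing, last_missing)
        self._last_seq: Optional[int] = None

    def on_tick(self, tick: Tick) -> None:
        if self._last_seq is not None and tick["seq"] != self._last_seq + 1:
            self.gaps.append((self._last_seq + 1, tick["seq"] - 1))
        self._last_seq = tick["seq"]
        self.rows.append(tick)


broker = Broker()
store = TickStore()
broker.subscribe("ticks.AAA", store.on_tick)

# Sequence number 3 is deliberately skipped to simulate a lost update.
for seq in (1, 2, 4):
    broker.publish("ticks.AAA", {"seq": seq, "symbol": "AAA", "price": 100.0 + seq})

print(len(store.rows), store.gaps)
```

A production messaging layer would add persistence, acknowledgements, and replay, but the decoupling is the same: the publisher does not need to know which databases are listening.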

Source: CJC - Visualising the number of updates through the database via mosaicOA.

Source: CJC - Monitoring & visualising the historic/present state of a time-series database using mosaicOA.

CJC monitors thousands of database-related IT metrics, like the above, including the number of updates per second, write queues, and database size, to name a few. These IT metrics, collected from a time-series database, are themselves stored indefinitely in a time-series database.
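A tolerance check over metrics such as these can be sketched in a few lines of Python. The metric names, the limits in `TOLERANCES`, and the sample values are hypothetical, and the `out_of_tolerance` helper is illustrative only; it does not represent how mosaicOA is implemented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tolerance:
    low: float
    high: float


# Hypothetical tolerance limits per metric (assumed values for illustration).
TOLERANCES = {
    "updates_per_second": Tolerance(1_000, 500_000),
    "write_queue_depth": Tolerance(0, 10_000),
    "database_size_gb": Tolerance(0, 2_000),
}


def out_of_tolerance(sample: dict) -> list:
    """Return one message per metric that is missing or outside its limits."""
    breaches = []
    for name, limit in TOLERANCES.items():
        value = sample.get(name)
        if value is None:
            breaches.append(f"{name}: missing")
        elif not (limit.low <= value <= limit.high):
            breaches.append(f"{name}: {value} outside [{limit.low}, {limit.high}]")
    return breaches


# Hypothetical sample in which the write queue is backing up.
sample = {
    "updates_per_second": 250_000,
    "write_queue_depth": 25_000,
    "database_size_gb": 800,
}
alerts = out_of_tolerance(sample)
print(alerts)
```

Treating a missing metric as a breach is a deliberate choice in this sketch: a feed that stops reporting is often a more serious signal than one that reports a high value.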

How CJC Can Help:

CJC is the leading market data technology consultancy and service provider for global financial markets. CJC provides multi-award-winning consultancy, managed services, cloud solutions, observability, and professional commercial management services for mission-critical market data systems. CJC is ISO 27001 certified, giving CJC’s partners the freedom to focus on their core business.

For more information, contact us:

Email: marketing@cjcit.com

Tel: +44(0) 203 328 7600
